To answer the question, I looked to the past to examine how we think, how we view the world, and how we make decisions.

Introduction

In November 2020, while walking along Kalaloch Beach in coastal Washington State, I encountered some oddly shaped rocks high up in the intertidal zone. During the drive home, I wondered how they got there, and later decided to spend some time researching the subject. The result was “My Mysterious Case of Beach Shingles,” the first post on Science for Everybody. Finding the answer to my question turned into a bit of a journey that led me to a better understanding of a world I had taken for granted.

A year later, on another car ride home from somewhere, I started wondering what a scientist actually is. Is the title reserved for a select few, or available to everyone? I decided to explore the concept in a new article. I figured it would be an easy one to write, with a straightforward statement about what a scientist actually is, and a clean, tidy conclusion about who was included and who was not.

I was wrong.

My first step was to look up the definition of a scientist. Merriam-Webster says it’s a scientific investigator. Wikipedia, not much better, says it’s someone who conducts scientific research. The Science Council took it a bit further:

“A scientist is someone who systematically gathers and uses research and evidence, to make hypotheses and test them, to gain and share understanding and knowledge.”

Other definitions were similar and generally not very helpful. It turned out that determining whether everyone is a scientist (or not) is a bit like pondering whether everyone is an artist, or a musician, or an athlete. If you create a strict definition to measure everyone against, only a select few are allowed into the club, potentially excluding and alienating a large group of people doing science-like things. If the definition is more expansive and squishier, pretty much everyone is included, thereby insulting those who have spent many years and thousands of dollars earning advanced degrees in science.

It would have been easier to ask whether everyone is a brain surgeon.

I noticed that at least a few definitions mentioned the term “scientific method,” so I explored what this method really is, and how versions of it have been used throughout our history. Going way back, I found numerous books and articles that speculated about how early humans might have interacted with their environment and each other to solve problems and improve their situations. I wondered if this behavior might have been a precursor to the organized way of thinking we use today to evaluate everything from a nonfunctioning toaster to the interactions of subatomic particles.

Even though the term “scientist” has only been around since 1834, history suggests that for millennia our ancestors were doing what we would call “science” without formal training, fancy college degrees, or sophisticated equipment. Approximately 2,400 years ago, a fellow named Democritus created an atomic theory of tiny particles always in motion, and believed in a universe populated with an infinite number of worlds governed by natural law. About 100 years later, Euclid wrote a book called Elements that described mathematical theory, and another called Optics that explained the behavior of light using geometric principles. These examples reminded me that “scientists” have been among us for a long time, and have influenced how we think, what we do, and how we view both the ancient and modern world.

Is everyone a scientist?

It’s time to find out.

The Basics of the Scientific Method

Everyone reading this article was probably taught the scientific method in school, then promptly forgot about it. Even scientists who routinely use it sometimes stumble over the meaning. One of the most succinct explanations comes from our friends at the Khan Academy, which I have modified a bit to explain how a scientist might deal with a potentially defective toaster:

- Make an Observation: the toaster is not working

- Ask a Question: why is the toaster not working?

- Propose a Hypothesis: the toaster is fine, the electrical outlet is defective

- Make a Prediction Based on the Hypothesis: if I plug the toaster into a different outlet, it will work

- Test the Prediction: plug the toaster into a different outlet and see what happens. If the toaster works, the hypothesis is supported; if it doesn’t, the hypothesis is rejected

- Iterate (reflect on results): toast means the hypothesis is supported, so something must be wrong with the original outlet; no toast means the hypothesis is rejected, so there must be something wrong with the toaster

The result of the final step is the launching point for a new set of hypotheses and actions that explore why the original electrical outlet doesn’t work or what exactly is wrong with the toaster. Most of us would probably just avoid using the outlet or throw the toaster away and get a new one. Old retired guys like me would probably try to fix the problem, leading to either an electrical fire or a trip to the store for a new toaster, or both.

This example reveals that toaster users, electricians, plumbers, auto mechanics, lawn mower repairmen, biologists, chemists, physicists, doctors, and old retired guys all use some form of the scientific method to explore ideas and make sense of things they observe. We’re all basically using the same system to solve problems — we just call it different names and use it in different ways.

As far as we know, this way of thinking is primarily a human trait, although some other animals use their cognitive abilities to do impressive things, as anyone working at a public aquarium with an octopus on display can attest. Humans, however, have taken it to an advanced level, allowing us to literally explore everything from the stars to the microbes beneath our feet. Why are we so good at using it, and when did we start? As you can imagine, there are many competing hypotheses, but most of them focus on a few common human traits that help the process work.

Let’s explore.

Things Humans Do

Science tells us that human beings have been walking the earth for a very long time. We started out as just one of many species, and likely lived in small groups as hunter-gatherers, ever on the lookout for food (or trying to avoid becoming food). Compared to other top-level predators, we were scrawny and weak, but we had a few advantages over our animal “friends” that enabled us to level the playing field, so to speak, including:

- A big brain

- A long lifespan

- The ability to communicate complex thoughts orally, and later, in writing

- Manipulators, as in hands with dexterous fingers and opposable thumbs.

We could also walk and run in an upright position, making it easier to cover long distances and to free up our hands for carrying stuff, like children and spears. One could argue these advantages enabled us to dramatically change the planet for better or worse, and one would be right.

The arc of our history is filled with countless discoveries, innovations, failures, and adaptations as we explored our world. As described in Yuval Noah Harari’s book Sapiens, scientists estimate that about 300,000 years ago, we made regular use of fire, and began constructing spears, axes, knives, and bows. We experienced a “cognitive revolution” roughly 30,000 to 70,000 years ago, when our ability to communicate using language reached levels never seen before. During that time, we were able to talk about things we had never seen or touched, create stories, and express ideas. The “agricultural revolution” began around 10,000 years ago when we gradually transitioned from hunting and gathering to a more sedentary farming lifestyle that led to communal living and eventually cities.

The formal “scientific revolution,” a drastic change in scientific thought, started only about 500 years ago, and our technological progress has skyrocketed ever since.

It’s important to note that our distant ancestors were not brainless brutes. Archaeological evidence suggests that Neanderthals, for example, might have been symbolic thinkers, as indicated by cave paintings dating back 65,000 years. There is also evidence that they buried their dead in a ritualistic manner.

Prehistoric “geniuses” may have been our first inventors, discovering something innovative and important, like spears and knives and wheels, and passing along the knowledge to others. Many of our “hardwired” traits also were useful when we began to develop language, writing, art, music, and science.

As noted in the book “Everyone’s a Scientist (But Experts are Rare…)”, humans in general are very good at:

- Being curious about our world

- Gathering data

- Testing assumptions

- Keeping records

- Gaining and sharing knowledge, and

- Making predictions for the future.

Curiosity led us to explore and invent. Data gathering helped us locate food sources and understand where food might be at certain times of the year. Testing assumptions was a part of a trial-and-error way of figuring things out — sometimes the hard way. Record keeping, first in oral form and later in writing, made it possible to store and share knowledge that everyone could use to make predictions or otherwise enrich their lives. All of these traits were important parts of learning and growing intellectually, and all of them are elements of what we now call the scientific method.

What follows are examples of how some of us have combined our hardwired attributes with the scientific method to make important contributions to the world.

Three Examples of People Who Changed the World

Choosing only three examples of people who have changed the world via science and technology is fraught with peril, because there are so many choices, ranging (alphabetically) from Aristotle to James Watt. I settled on three examples, covering four people (counting both Wright brothers), who made remarkable contributions using scientific tools and methodologies that are, in many ways, accessible to all of us, especially if you occasionally daydream.

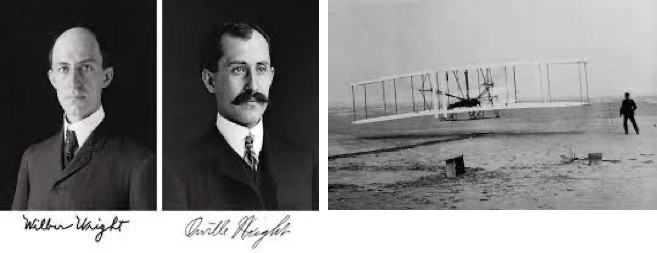

The Wright Brothers

In the first few years of the 1900s, when people of the horse and buggy era were still getting used to sharing the road with noisy, dangerous internal combustion automobiles, the very idea of air travel was often thought impossible. Early attempts at flight were inherently dangerous, and routinely ended in injury or death. Despite this, two brothers living in Dayton, Ohio, decided to give it a try.

At first glance, the resumes of Orville and Wilbur Wright looked pretty thin. Orville was a high school dropout, and Wilbur, injured as a youth in a sporting accident, became something of a homebound recluse for several years, availing himself of his father’s extensive library to learn about the world. The brothers worked as printers and publishers for a while, and in 1892 they opened a bicycle repair shop called the Wright Cycle Exchange. During their printing and publishing days, they read about experiments in aeronautics (mostly in Europe) and mourned the death of Otto Lilienthal, a German pioneer of flight and one of their heroes. Lilienthal’s death spurred them to get serious about flying. And that’s exactly what they did.

The brothers read everything they could get their hands on about flying, especially about how to control an aircraft in flight, a critical problem that caused many of the crashes. Then they did what few other inventors of that time had bothered to do: they spent an enormous amount of time watching birds, and more specifically, how birds controlled their flight. Wilbur took copious notes, which were later used in publications and lectures. Based on the bird studies, he developed a concept called “wing warping” to control a plane’s lateral roll and incorporated it into their design. In 1900, he wrote:

The brothers started with kites and gliders, and in 1900 relocated their testing grounds to a lonely, windy spot at Kitty Hawk, North Carolina. There they experimented with different aircraft designs, eventually creating large gliders that could carry one pilot. They crashed into the sand dunes often and learned from every mishap. Other inventors were busy as well, and many had access to far more expertise and resources, including wealthy sponsors footing the bill. The Wright Brothers had only the money they earned from their bicycle shop, their testing location at Kitty Hawk, and each other.

For the most part, the work of the brothers was ignored by the aviation “experts” within and outside of the United States. But over time, it became obvious even to their competitors that the Wright Brothers were for real, despite their humble beginnings. At scientific conferences discussing aeronautical advances, attendees were in awe of the knowledge Wilbur Wright possessed, even as they tried to beat him into the sky.

You all know the story: on December 17, 1903, the Wright Flyer, piloted by Orville, became the first motorized airplane to fly. It wasn’t a long flight (120 feet and 12 seconds), but it proved the concept. The rest, as they say, really is history.

Years ago, on a trip to Washington D.C., I visited the National Air and Space Museum and saw the 1903 Wright Flyer — the world’s first airplane. It made me smile, because I knew the story. I thought about their humble beginnings, their lack of professional credentials, and their independent, stubborn, studious research. They used their own version of the scientific method to create the first motorized airplane in history.

Not bad for two bicycle repairmen.

Albert Einstein

One of the most famous and impactful scientists in the world didn’t enjoy an easy transition from obscurity to international fame. Albert Einstein struggled at times academically, primarily because he didn’t bother to show up for class, and one of his instructors called him “a lazy dog.” But the time spent away from class was not wasted; instead, he spent hours working through physics problems on his own – an activity he continued throughout his life.

After graduating from university, he was not immediately offered the academic position he had hoped for. Instead, he took a job as a junior patent clerk in Bern, Switzerland, evaluating the ideas of others. The work was easy for him, leaving him hours to spend on his own theories, ideas, and daydreams, culminating in his “miracle year” of 1905, when he published four scientific papers that revolutionized science. A few months later, he produced a fifth paper stating that mass and energy were interchangeable, and E=mc² was born. Many of his predictions about time and space could not be verified until decades after his death, when our technology finally caught up to his ideas. He made a few mistakes, too, and ended up on the wrong side of the quantum physics revolution, because he could not accept “God playing dice with the universe.”

Einstein’s approach to physics epitomizes how a human can think and create in innovative ways to confront cosmological questions, even when hampered by primitive or nonexistent technology and equipment. There was no sophisticated laboratory equipment, and there were no supercomputers in 1905 that Einstein could use to test his ideas. What Einstein did have was an abundance of curiosity and imagination, and he used both to work through complicated questions and challenges in physics. He is known for creating “thought experiments” to help him visualize an idea and work through the potential solutions. For example, at the age of 16, he wondered what it would be like to chase a beam of light, and what would follow if he caught it. This line of thinking eventually led to his theory of special relativity, which showed the connection between space and time.

Einstein worked through physics problems in his office, while playing his violin, or while sailing his small boat. He was a genius, but frequently got lost on his way home from work. His way of thinking and his creative process were best summarized in a 1929 interview with the Saturday Evening Post, when he said:

“I believe in intuitions and inspirations. I sometimes feel that I am right. I do not know that I am… [but] I would have been surprised if I had been wrong. I am enough of the artist to draw freely upon my imagination. Imagination is more important than knowledge. Knowledge is limited. Imagination encircles the world.”

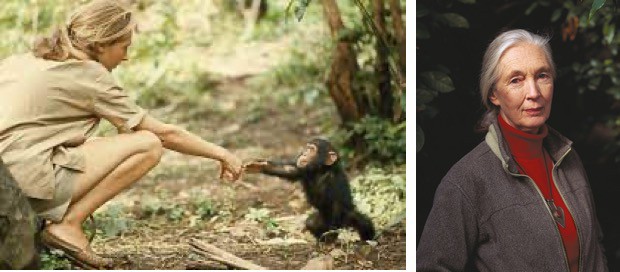

Jane Goodall

Jane Goodall is arguably one of the most famous and recognizable of our living scientists. Born in 1934, she is 88 years old as I write this and still going strong. Her journey resonates with many young women born in her era, and she serves as an example for those considering a career in science today. She was inspired to go to Africa at a young age after reading The Story of Doctor Dolittle by Hugh Lofting. She couldn’t afford to go to college and instead went to secretarial school and worked at Oxford University typing and filing papers.

A trip to Kenya in 1957 to visit a school friend changed the trajectory of her life and, subsequently, our view of primates and the ancient world. There she met Dr. Louis Leakey, a famed anthropologist studying early human origins. He hired her first as a secretary, then supported her involvement in his research and later her formal education. Leakey believed studying primate behavior would shed light on early human societies, and he saw Jane’s lack of scientific training as an advantage: she would not be biased by traditional thought and orthodoxy and could conduct the research with an open mind.

Leakey chose well: in her first year of work with chimpanzees in Tanzania’s Gombe National Park, she discovered that chimps made and used tools and were omnivorous rather than vegetarian, behaviors never before documented in wild chimpanzees. She also discovered that although chimps were capable of waging war, they also exhibited strong mother-infant bonds and compassion. Her research transformed science’s view of primate behavior, and provided insights into how prehistoric human communities might have functioned.

Her approach was considered unorthodox by scientists of that era in that she immersed herself in the chimpanzee world with “a mind uncluttered by academia.” She gave individuals human names rather than numbers, and closely observed and imitated their behaviors by living and working in their neighborhood. In Gombe National Park, it took two years for the chimpanzee community she studied to accept her. Her research tools consisted of a pair of binoculars, a pencil, and a notebook. Although she received a PhD in ethology (the study of animal behavior) in 1966, it was never her primary concern. She once told BBC radio

After graduation, she continued her research at Gombe for another 20 years. Louis Leakey’s response to her early discovery of chimpanzee toolmaking captured the significance of her work:

“Now we must redefine ‘tool’, redefine ‘man’, or accept chimpanzees as humans.”

In 1977, the Jane Goodall Institute was founded to promote global wildlife and environmental conservation.

Final Thoughts

Is everyone a scientist? I would say yes, but acknowledge it’s complicated.

A lot depends on how we choose to define the term, the emphasis we put on formal training and academics, and the way we view the past and present world.

It’s easy to become preoccupied with terms, definitions, titles, and academic or scholastic metrics that end up partitioning people into separate groups that rarely interact. Now, more than ever, we truly need to build bridges rather than walls, and connect people from all walks of life by demystifying what scientists do and recognizing the natural abilities we all have in common. We should also embrace and appreciate the experts in all fields of study and endeavors that help us along our way.

We all have the capacity to be curious, to observe, to reason, to plan, to predict, to test, and to learn from our mistakes. And we’ve been doing it as a species for a very long time.

In the end, I think Dr. Edward Brooke got it right: Everyone’s a Scientist, But Experts are Rare…

Information Sources

Introduction

How the Word “Scientist” Came to Be, National Public Radio, May 21, 2010, https://www.npr.org/templates/story/story.php?storyId=127037417

Famous Scientists, The Art of Genius, Famousscientists.org, https://www.famousscientists.org/top-scientists-in-antiquity/.

Ultraprecise Atomic Clock Experiments Confirm Einstein’s Predictions About Time, Adam Mann, Live Science, February 25, 2022, https://www.livescience.com/atomic-clock-confirms-einstein-predictions-about-time.

The Basics of the Scientific Method

The Scientific Method, The Khan Academy, https://www.khanacademy.org/science/biology/intro-to-biology/science-of-biology/a/the-science-of-biology.

Theory vs. Hypothesis: Basics of the Scientific Method, MasterClass Articles, November 8, 2020, https://www.masterclass.com/articles/theory-vs-hypothesis-basics-of-the-scientific-method#what-is-a-hypothesis.

Animal Cognition, Animalcognition.org, https://www.animalcognition.org/tag/problem-solving/.

Seagulls, Songbirds and Parrots: What New Research Tells Us About Their Cognitive Ability, Claudia Wascher, The Scotsman, January 1, 2022, https://www.scotsman.com/news/opinion/columnists/what-new-research-tells-us-about-birds-cognitive-ability-claudia-wascher-3511025.

Are Octopuses Smart? The mischievous mollusk that flooded a Santa Monica Aquarium is not the first MENSA-worthy octopus, Brendan Borrell, Scientific American, February 27, 2009, https://www.scientificamerican.com/article/are-octopuses-smart/.

Things Humans Do

Sapiens: A Brief History of Humankind, Yuval Noah Harari, https://www.ynharari.com/book/sapiens-2/

How Many Early Human Species Existed on Earth?, Benjamin Plackett, Live Science, January 24, 2021, https://www.livescience.com/how-many-human-species.html.

Were Other Humans the First Victims of the Sixth Mass Extinction?, The Conversation, November 21, 2019, https://theconversation.com/were-other-humans-the-first-victims-of-the-sixth-mass-extinction-126638.

Scientific Revolution, Britannica, https://www.britannica.com/science/Scientific-Revolution

Why Won’t the Old Caveman Stereotypes for Neanderthals Die? Barbara J. King, National Public Radio Opinion, March 5, 2018, https://www.npr.org/sections/13.7/2018/03/05/589858179/why-won-t-the-old-caveman-stereotypes-die

How a Handful of Prehistoric Geniuses Launched Humanity’s Technological Revolution, Nicholas R. Longrich, The Conversation, December 29, 2021, https://theconversation.com/how-a-handful-of-prehistoric-geniuses-launched-humanitys-technological-revolution-171511.

Everyone’s a Scientist (But Experts are Rare…), Dr. Edward Brooke, https://www.everyonesascientist.com

The Wright Brothers

The Wright Brothers, David McCullough, https://www.simonandschuster.com/books/The-Wright-Brothers/David-McCullough/9781476728759

Wright Brothers, Wikipedia, https://en.wikipedia.org/wiki/Wright_brothers

The Wright Brothers: Inventing a Flying Machine, Smithsonian National Air and Space Museum, https://airandspace.si.edu/exhibitions/wright-brothers/online/fly/1903/

BOOKS OF THE TIMES; Genius and Eccentricity Of the Wright Brothers, New York Times, Herbert Mitgang, Aug. 5, 1989, https://www.nytimes.com/1989/08/05/books/books-of-the-times-genius-and-eccentricity-of-the-wright-brothers.html.

Wing Warping, Wikipedia, https://en.wikipedia.org/wiki/Wing_warping.

Einstein

The True Story Behind Einstein’s Quirky Tongue Photo, Oliver G. Alvar, January 31, 2022, https://culturacolectiva.com/history/albert-einstein-tongue-photo-true-story-behind/

The Year Of Albert Einstein, Richard Panek, Smithsonian Magazine, June 2005, https://www.smithsonianmag.com/science-nature/the-year-of-albert-einstein-75841381/.

Einstein: His Life and Universe, Walter Isaacson, https://www.goodreads.com/book/show/10884.Einstein

Einstein 1905: The Standard of Greatness, John S. Rigden, https://physicstoday.scitation.org/doi/10.1063/1.2195321

The Light-Beam Rider, Walter Isaacson, The New York Times, October 30, 2015, https://www.nytimes.com/2015/11/01/opinion/sunday/the-light-beam-rider.html.

Einstein’s Philosophy of Science, Stanford Encyclopedia of Philosophy, February 11, 2004, https://plato.stanford.edu/entries/einstein-philscience/.

How Einstein Learned Physics, Scott H. Young, https://www.scotthyoung.com/blog/2017/03/16/how-einstein-learned-physics/.

When Einstein Tilted at Windmills, Amanda Gefter, November 28, 2016, Nautilus Magazine, https://nautil.us/when-einstein-tilted-at-windmills-5497/.

‘God Plays Dice with the Universe,’ Einstein Writes in Letter About His Qualms with Quantum Theory, Mindy Weisberger, Live Science, June 12, 2019, https://www.livescience.com/65697-einstein-letters-quantum-physics.html.

Albert Einstein: “Imagination Is More Important Than Knowledge,” Jeff Nilsson, The Saturday Evening Post, March 20, 2010, https://www.saturdayeveningpost.com/2010/03/imagination-important-knowledge/.

Einstein’s Escapes, American Museum of Natural History, https://www.amnh.org/exhibitions/einstein/life-and-times/einstein-s-escapes.

Jane Goodall

Jane Goodall, National Geographic Society, https://www.nationalgeographic.org/article/jane-goodall/.

Jane Goodall: The Way She Saw the World Changed the World, Jane Goodall Institute, https://janegoodall.org/our-story/our-legacy-of-science/.

How Jane Goodall Made a Scientific Breakthrough Without a College Degree, Jennifer Larson, Time Magazine, July 14, 2015, https://time.com/3949985/jane-goodall-college-history/.

Jane Goodall: How She Redefined Mankind, Henry Nicholls, BBC.com, https://www.bbc.com/future/article/20140331-the-woman-who-redefined-mankind.

Jane Goodall Biography, Mary Bagley, Live Science, March 28, 2014, https://www.livescience.com/44469-jane-goodall.html.

Jane Goodall on the Simple Steps We Should All Take to Save the Planet, Zoe Ruffner, Vogue, October 14, 2020, https://www.vogue.com/article/jane-goodall-sustainability-interview.
